Towards a Sustainable Fact-checking Model

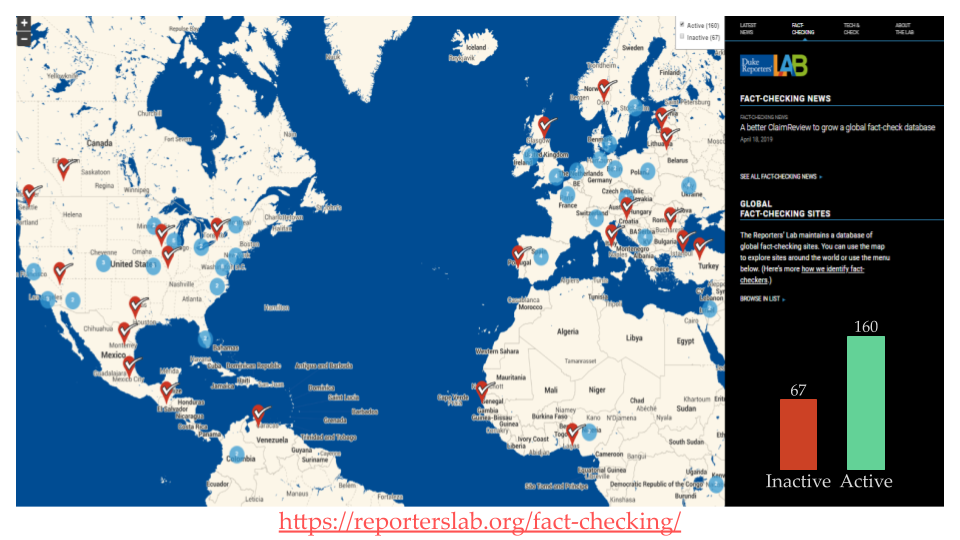

About 33% of all the fact-checking platforms are inactive because they couldn't sustain following the traditional operation model. Fact-checking is tied to impartial service

and the platforms cannot take support from partisan funding sources. Also, the need seems to connected with election cycles. Moreover, the fact-checking process is labor intensive

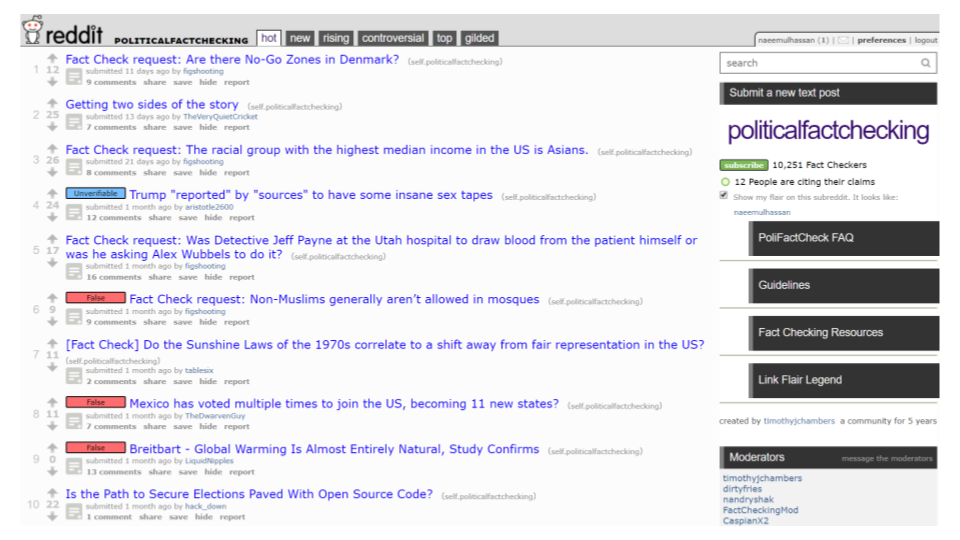

and less scalable. Many professional fact-checkers are suspicious of the idea of crowdsourced fact-checking in which users verify factual claims.

Professionals often claim that users lack required skills, and are biased. In this project, we argue that contribution of crowds to fact-checking is essential

in the networked media ecosystem where information is abundant and rumors spread like wildfire with resources for investigative

journalism steadily plummeting. A sustainable model for

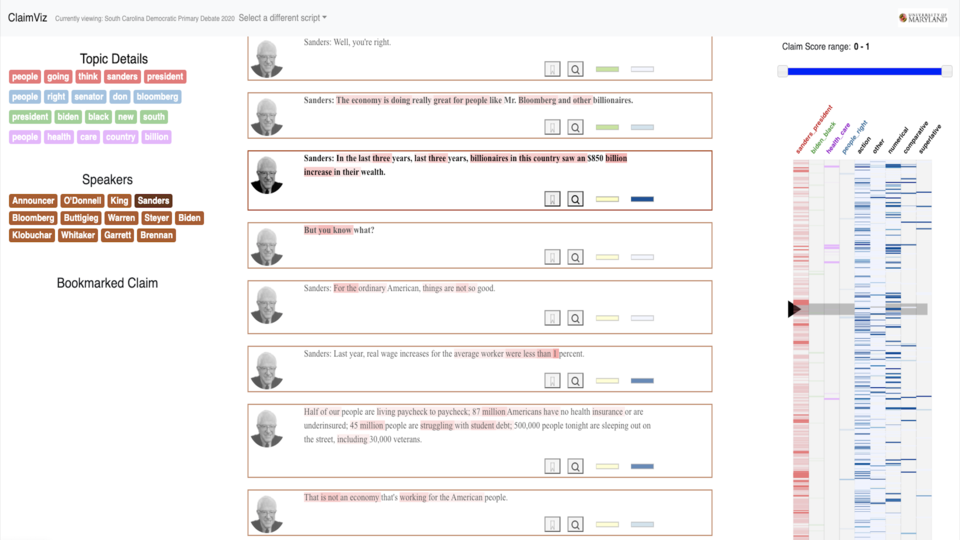

fact-checking platforms would consist of crowds, professional factcheckers, and automated assisting tools. Crowds can perform many

mundane but important tasks under the guidance of professionals while programmers build tools to find credible sources and

make sense of a large amount of user inputs. Crowds can also help

better identify facts that people care about and identify sources

that may lack credibility but still are popular. Professionals can

play the 'moderator' and 'seminar leader' role in this process.

The purpose of this project is to explore such a crowdsourced fact-checking model, in which users check facts under the guidance of moderators.